Sequential data, meaning data with a time dependency, is very common in business, ranging from credit card transactions to healthcare records to stock market prices. But privacy regulations limit and dramatically slow down access to useful data that is essential to research and development. This creates a demand for highly representative, yet fully private, synthetic sequential … [Read more...] about Generating Synthetic Sequential Data Using GANs

Content Generation

8 AI Companies Generating Creative Advertising Content

AI Models Generated by Rosebud AI

After the introduction of Generative Adversarial Networks (GANs) in 2014, a whole new era for AI image synthesis began. The latest GAN architectures can generate high-resolution, realistic, and colorful images that are almost impossible to distinguish from real photographs. So, why spend time on exhausting and expensive photoshoots and … [Read more...] about 8 AI Companies Generating Creative Advertising Content

Generating New Faces With Variational Autoencoders

Deep generative models are gaining tremendous popularity in both industry and academic research. The idea of a computer program generating new human faces or new animals can be quite exciting. Deep generative models take a slightly different approach compared to supervised learning, which we shall discuss very soon. This tutorial covers the basics … [Read more...] about Generating New Faces With Variational Autoencoders

4 Cutting-Edge AI Techniques for Video Generation

It is no secret that algorithms today can generate very realistic deepfakes - images or videos that are totally fake but very hard to distinguish from real ones. You can make Mark Zuckerberg talk about “one man with total control of billions of people’s stolen data” and suspicion will arise only because Mark is not likely to say these exact words while the video … [Read more...] about 4 Cutting-Edge AI Techniques for Video Generation

OpenAI’s GPT-2: Results, Hype, and Controversies

The nonprofit AI research company, OpenAI, recently released a new language model, called GPT-2, which is capable of generating realistic texts in a wide range of styles. In fact, the company stated that the model is so good at automatic text generation that it can be used for nefarious purposes; therefore, it did not publicize the trained model. The dangerous-to-release … [Read more...] about OpenAI’s GPT-2: Results, Hype, and Controversies

How to Create a Fake Video of a Real Person

Recent advances in artificial intelligence have made it possible to create convincing fake videos, or deepfakes, of real people. While the ethical implications and the creative capabilities of this amazing technology are only beginning to be explored, there are growing concerns that this technology could be used maliciously to ruin reputations and cause extensive damage. Can … [Read more...] about How to Create a Fake Video of a Real Person

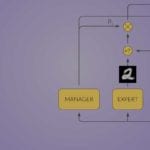

An In-Depth Guide To Generative Adversarial Networks (GANs)

Generative Adversarial Networks are a powerful class of neural networks with remarkable applications. They essentially consist of a system of two neural networks — the Generator and the Discriminator — dueling each other. Given a set of target samples, the Generator tries to produce samples that … [Read more...] about An In-Depth Guide To Generative Adversarial Networks (GANs)

Intuitively Understanding Variational Autoencoders

In contrast to the more standard uses of neural networks as regressors or classifiers, Variational Autoencoders (VAEs) are powerful generative models, with applications as diverse as generating fake human faces and producing purely synthetic music. This post will explore what a VAE is, the intuition behind why it works so well, and its uses as a powerful … [Read more...] about Intuitively Understanding Variational Autoencoders

Mixture of Variational Autoencoders – a Fusion Between MoE and VAE

The Variational Autoencoder (VAE) is a paragon for neural networks that try to learn the shape of the input space. Once trained, the model can be used to generate new samples from the input space. If we have labels for our input data, it’s also possible to condition the generation process on the label. In the MNIST case, it means we can specify … [Read more...] about Mixture of Variational Autoencoders – a Fusion Between MoE and VAE

Variational Autoencoders Explained in Detail

In the previous post of this series I introduced the Variational Autoencoder (VAE) framework, and explained the theory behind it. In this post I’ll explain the VAE in more detail, or in other words – I’ll provide some code 🙂 After reading this post, you’ll understand the technical details needed to implement VAE. As a bonus point, I’ll show you how by … [Read more...] about Variational Autoencoders Explained in Detail